The internet has always had its seasons. One month it is action figures; the next it is cinematic headshots; and now, in early 2026, it is caricatures. Scroll through LinkedIn, Instagram, or WhatsApp and you will see them everywhere: stylised portraits of hairdressers surrounded by blow-dryers, chefs framed by copper pans, brokers standing confidently before cartoon skylines of glass and steel.

The prompt is deceptively simple: “Create a caricature of me and my job based on everything you know about me.”

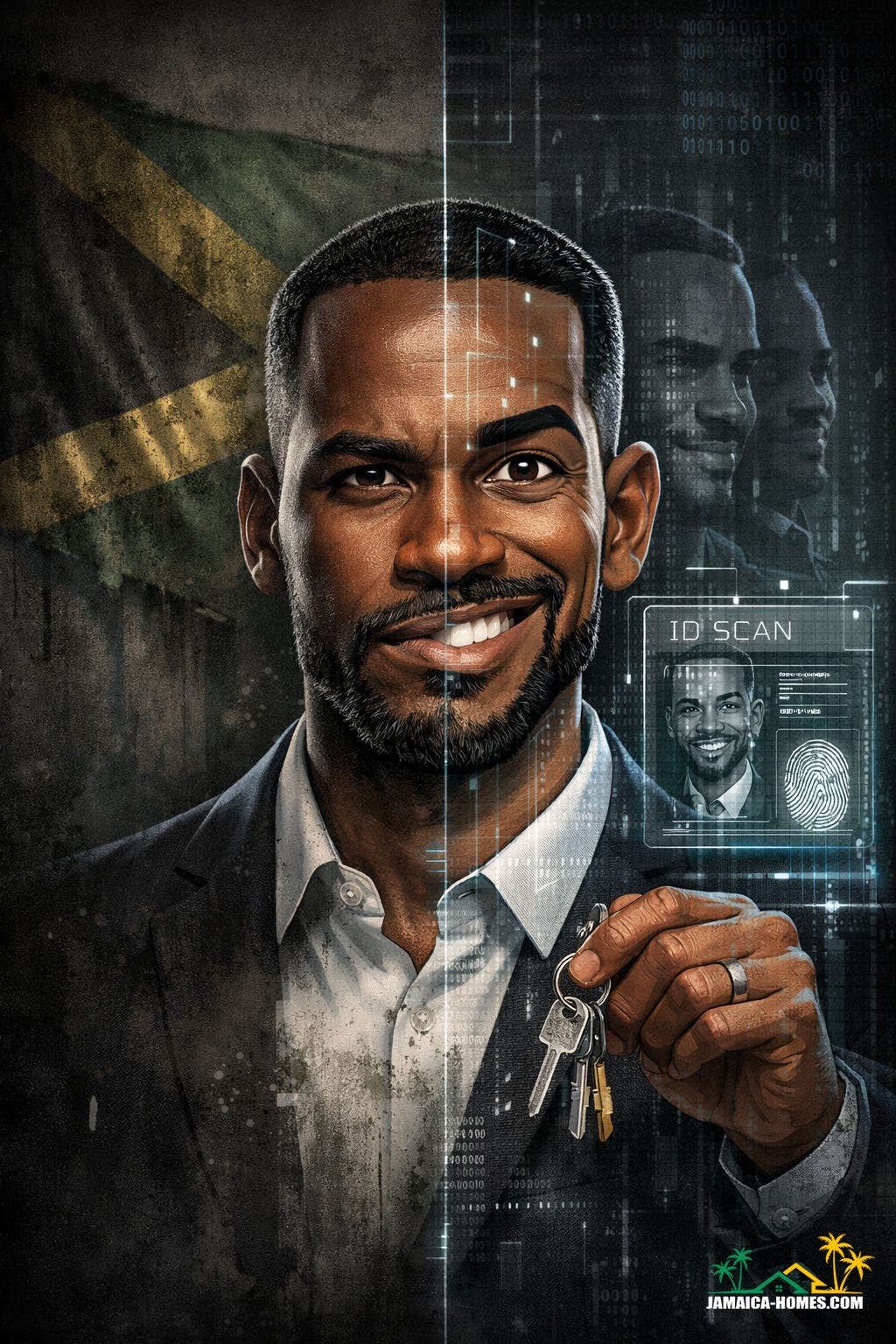

For real estate professionals in particular, the trend has landed with force. Agents are flooding the internet with polished, playful, AI-generated versions of themselves: sharper jawlines, brighter suits, exaggerated smiles, miniature cranes and condominiums rising in the background. It feels modern. It feels creative. It feels, on the surface, harmless.

But in a world where deepfake fraud is now described by researchers as operating at industrial scale, the cheerful cartoon deserves a more serious conversation.

When Fun Meets Infrastructure

What makes this trend different from older caricature apps is not simply the art style. It is the layer of context. The system does not just transform a selfie into a cartoon; it draws on chat history, job descriptions, habits, preferences, and sometimes multiple uploaded images to create something that feels deeply personal.

That personalisation is precisely what delights users. It can feel as if the system “understands” them — their industry, their personality, their daily rhythm. For agents competing in crowded property markets, that sense of distinction matters. A strong image builds memorability. Memorability builds brand equity. In an attention economy, visual differentiation is an asset.

Yet the same mechanism that creates that intimacy also expands exposure.

Every prompt sits on top of immense infrastructure. Artificial intelligence is not an abstract cloud; it is rows of servers, cooling systems, fibre cables, and energy grids. Alphabet, the parent company of Google, recently signalled the scale of this investment by issuing bonds that mature in 100 years as part of a reported $20 billion fundraising effort to continue building out AI infrastructure, and demand from lenders far exceeded the offer. The appetite for AI is not slowing. The capital behind it suggests permanence.

AI is not going anywhere soon. That is not speculation. It is structural.

And when a technology becomes structural, the way we use it becomes consequential.

Your Face Is Not Just an Image

A photograph is not only a photograph. It is geometry. It is depth. It is pattern recognition data. In technical terms, facial images are biometric identifiers — unique markers that can be used in verification, authentication and surveillance systems.

When a professional uploads ten or twenty well-lit photographs from different angles, they are not merely “making a cartoon.” They are providing a dataset.

In isolation, that may appear insignificant. In aggregation, it is powerful.

Deepfake voice cloning is already highly convincing. Video manipulation continues to improve. Researchers tracking AI misuse report that fraud, scams and targeted impersonation now represent a dominant category of AI-related incidents. The cost of entry has fallen sharply. Tools are widely accessible. Production quality is rising.

For real estate professionals, the implications are not theoretical. This is an industry built on trust and urgency. Deposits move quickly. Instructions are sometimes delivered by phone, email or messaging apps. A convincing synthetic video call, a cloned voice message, or a doctored endorsement can create confusion in minutes.

Dean Jones:

“We need to be clear about the difference between branding and exposure. An AI caricature can be clever marketing. It can humanise you. But if you are uploading high-resolution, multi-angle photographs and layering in detailed personal information, you are expanding your attack surface. In real estate, where transactions are time-sensitive and high-value, that matters. Your likeness is an asset — and assets require stewardship. If deepfake fraud is becoming industrial, then professionals must behave professionally with their own data.”

The risk is not that every avatar platform is malicious. The risk is that once your digital likeness exists in high fidelity, it becomes easier to replicate elsewhere.

The Illusion of “Free”

There is another dimension to this trend that rarely makes the caption beneath the smiling portrait: cost.

Each prompt draws power. Academic research suggests that a single AI query can use significantly more electricity than a conventional web search. Data centres require vast amounts of water for cooling. Multiply that by viral participation — tens of thousands of prompts in a matter of days — and the footprint grows.

At the same time, the economics of AI are shifting. Operating advanced models is expensive. Infrastructure must be financed, maintained, secured. Energy prices in regions hosting large data centres have risen over recent years, prompting utilities to invest heavily in new capacity. Providers are increasingly exploring subscription tiers and paid access, not simply as revenue streams but as mechanisms to manage demand.

The caricature may feel free. The ecosystem behind it is not.

This is not an argument against innovation. It is an argument for maturity. When a technology becomes foundational to global infrastructure, financed with century-long bonds and multi-billion-dollar commitments, casual use acquires systemic implications.

Oversharing in the Age of Synthesis

Cybersecurity specialists have warned that the normalisation of detailed personal prompts can accelerate identity misuse. When users invite a system to summarise “everything you know about me,” they often provide employment context, behavioural patterns, preferences, family details, sometimes even location clues.

In isolation, these fragments appear harmless. Combined, they form a profile.

Large language models are designed to synthesise context. That is their strength. But synthesis can also assist malicious actors in crafting highly personalised social engineering attempts.

Real estate professionals, who regularly publish listings, schedules and achievements online, are already visible. Adding richly contextualised AI-generated portraits may deepen that visibility in ways that are not immediately obvious.

Dean Jones:

“The creative instinct in Jamaica is powerful. We turn technology into expression quickly. That is something to celebrate. But discipline must accompany creativity. If you would not email your passport copy to an unknown address, you should think twice about uploading the cleanest images of your face alongside detailed professional narratives. The goal is not fear. The goal is foresight. Trust is the currency of our industry, and once trust is compromised — even through impersonation — the repair is slow.”

A Real Estate Lens on Risk

Why does this conversation resonate so strongly in property markets?

Because impersonation has leverage here. A synthetic message requesting a “small holding deposit” can unlock large sums. A fabricated video endorsing a development can influence buyers. A convincingly altered headshot attached to a fake listing can misdirect attention.

Even recruitment is not immune. Cases have emerged globally in which candidates who appeared in video interviews were later suspected of being AI-generated composites. The technology is evolving faster than instinct.

In that environment, the flood of AI caricatures does more than decorate timelines. It normalises synthetic representation.

Normalisation reduces suspicion.

And reduced suspicion benefits those who intend harm.

Toward Responsible Participation

None of this means professionals must abstain entirely from experimentation. Artificial intelligence is reshaping marketing, research, client communication and operational efficiency. To ignore it would be imprudent.

But participation should be intentional.

Consider limiting the resolution and variety of the images you upload. Avoid sharing sensitive documents or private identifiers in prompts. Reinforce public anti-fraud messaging by stating clearly that payment instructions will never be delivered solely via social media or messaging apps. Establish internal verification protocols for financial transactions. Treat your digital likeness with the same seriousness as client funds.

Most importantly, recognise that virality is not validation. A trend can be joyful and risky at the same time.

The caricature boom captures something authentic about this moment in technology. It demonstrates how seamlessly generative AI can weave identity, profession and narrative into a single image. It also illustrates how quickly we can become comfortable externalising pieces of ourselves to systems we do not fully control.

Artificial intelligence will continue to expand. Investment levels indicate as much. Infrastructure is being built for decades, not seasons. The question is not whether AI will shape professional life. It is whether professionals will shape their own boundaries within it.

The smiling cartoon on your profile may look harmless.

But in a digital economy where likeness can be cloned, context can be synthesised, and trust can be weaponised, even a caricature deserves careful thought.